In 2026, every AI product calls itself something. Chatbots are rebranded as “agents.” Agents are sold as “copilots.” Copilots become “AI employees.” Meanwhile, the actual differences between these categories, the ones that affect what the software can and can’t do for you, are getting buried under marketing.

If you’re trying to figure out which AI tool you need (or what your company is actually building), the labels are useless. What matters is the underlying architecture.

This post cuts through the marketing. By the end, you’ll know the real difference between a chatbot, a copilot, and an AI agent, not by slogan but by what each one is structurally capable of. And you’ll know how to tell them apart when a vendor calls their chatbot an agent.

The short answer

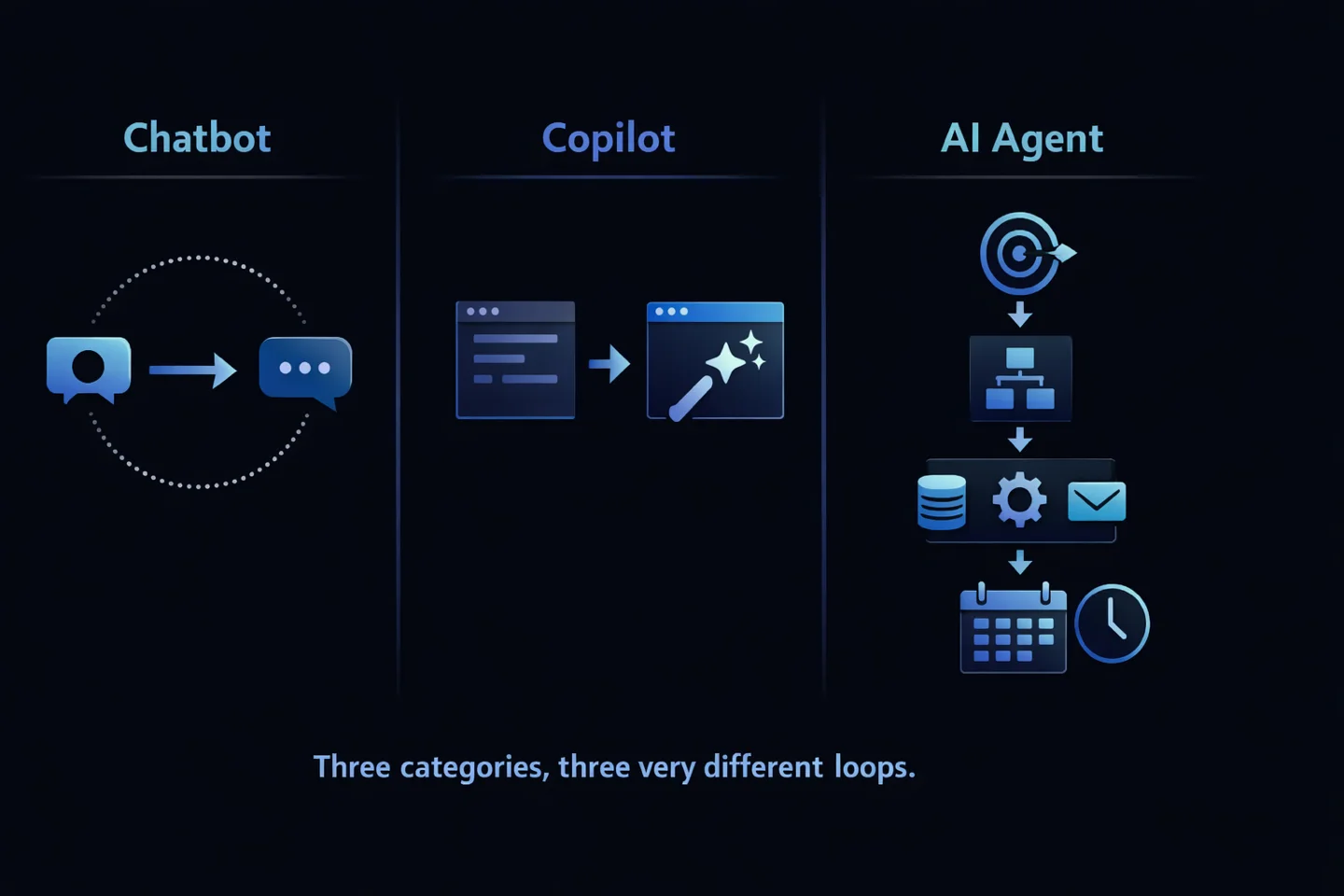

- A chatbot responds to messages.

- A copilot assists you inside another application.

- An AI agent takes a goal, plans, uses tools, and works with some autonomy, often across time and systems.

The differences compound fast. Let’s break down each one.

What is a chatbot?

A chatbot is a conversational interface. You send a message, it sends a response, the conversation continues. That’s the whole thing.

The modern chatbot is a thin application wrapper around a large language model. The model itself is stateless (it keeps no memory between calls), so the chatbot layer keeps the conversation coherent by resending the chat history with every new message. When the session ends, that history disappears unless the product explicitly saves it.
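To make the “resend the history” idea concrete, here is a minimal sketch. It assumes a hypothetical `fake_model` function standing in for a real LLM call, and a hypothetical `ChatSession` wrapper; neither is any vendor’s actual API. The point is only that the model sees nothing except what the wrapper passes in on each turn.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def fake_model(messages: List[Message]) -> str:
    """Stand-in for a stateless LLM call (an assumption for illustration).

    It has no memory of its own: everything it "knows" is in `messages`.
    """
    user_turns = [m for m in messages if m["role"] == "user"]
    latest = user_turns[-1]["content"]
    return f"I can see {len(user_turns)} user message(s); latest: {latest!r}"


class ChatSession:
    """Hypothetical chatbot layer that keeps the conversation coherent."""

    def __init__(self, model: Callable[[List[Message]], str]) -> None:
        self.model = model
        self.history: List[Message] = []  # lives only as long as this object

    def send(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        # The full history is resent on every turn; the model is stateless.
        reply = self.model(list(self.history))
        self.history.append({"role": "assistant", "content": reply})
        return reply


session = ChatSession(fake_model)
first = session.send("Hi")
second = session.send("What did I just say?")

# A new session starts from an empty history, which is why nothing carries
# over unless the product explicitly saves it somewhere.
fresh = ChatSession(fake_model).send("Do you remember me?")
```

In this sketch, `second` reflects two user messages while `fresh` reflects only one, because the second session never received the earlier history.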

What chatbots are good at

- Answering a question

- Drafting an email or paragraph

- Explaining a concept

- Summarizing text you paste in

- Brainstorming ideas

What chatbots fundamentally can’t do

- Take action in other systems without being embedded in them

- Remember you across sessions (beyond the short “sticky-note” memory some of them ship)

- Run unattended

- Chain multiple tools together to achieve a goal

Examples: ChatGPT’s default chat UI, Claude.ai, Gemini’s chat interface, the customer-service bot on a SaaS website, Intercom’s Fin.

If a product’s primary UI is “type a message, get a message back,” it’s a chatbot, regardless of how smart the response sounds.

What is a copilot?

A copilot is an AI assistant embedded inside another application to help you do a specific task faster. It’s not a separate destination. It lives where the work is already happening.

The key structural difference from a chatbot: a copilot has context scoped to the host app. GitHub Copilot sees the code you’re writing. Microsoft 365 Copilot sees the document open in Word or the spreadsheet open in Excel. Gmail’s Smart Compose sees the email thread. This context is what lets the copilot make suggestions that are immediately useful.

But the copilot doesn’t leave the host app, and it doesn’t act without you. It suggests; you accept, reject, or edit.
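The suggest-then-approve pattern can be sketched in a few lines. Everything here is hypothetical: `HostDocument` stands in for whatever the host app exposes, and `suggest_completion` is a placeholder where a real copilot would call a model with the document as context. The sketch only shows that nothing changes in the document until the user decides.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    text: str


class HostDocument:
    """Hypothetical host-app document; the copilot's context is scoped to it."""

    def __init__(self, content: str) -> None:
        self.content = content


def suggest_completion(doc: HostDocument) -> Suggestion:
    # Placeholder for a model call that would receive doc.content as context.
    if doc.content.rstrip().endswith("Best regards,"):
        return Suggestion(text="\nAlex")
    return Suggestion(text="")


def review(doc: HostDocument, suggestion: Suggestion, decision: str,
           edited: Optional[str] = None) -> str:
    """Apply the user's decision: 'accept', 'edit', or 'reject'."""
    if decision == "accept":
        doc.content += suggestion.text
    elif decision == "edit" and edited is not None:
        doc.content += edited
    # 'reject' (or anything else) leaves the document untouched.
    return doc.content


draft = HostDocument("Thanks for the update.\nBest regards,")
proposal = suggest_completion(draft)
after_reject = review(draft, proposal, "reject")
after_accept = review(draft, proposal, "accept")
```

The design choice worth noticing is that `suggest_completion` never writes to the document itself; only `review`, driven by the user’s decision, does.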

What copilots are good at

- Auto-completing code, emails, documents

- Summarizing what’s already in front of you

- Suggesting the next step in a workflow you’re actively doing

- Drafting content you’ll edit and approve

What copilots don’t do

- Act autonomously outside their host application

- Use tools beyond what the host provides

- Persist long-term memory about you beyond that app

- Run in the background when the app is closed

Examples: GitHub Copilot, Microsoft 365 Copilot, Notion AI, Cursor, Gmail Smart Compose, Figma’s AI features.

The telltale sign of a copilot is that it doesn’t exist on its own. Close the host app, and the copilot is gone.

What is an AI agent?

“AI agent” is the most abused term in the industry right now, so the real definition deserves some care.

A genuine AI agent has three things that chatbots and copilots lack:

1. Goal-orientation. You give it an objective, not a prompt. “Monitor this competitor’s blog and send me a digest every Friday” is a goal. “Write a blog post about competitors” is a prompt. A chatbot handles the second. Only an agent handles the first.

2. Tool use and real action. It uses actual tools: a browser, a file system, APIs, an email client, a scheduled job runner. It doesn’t just describe what should happen. It makes it happen.

3. Autonomy and persistence. It runs on its own once configured. It remembers context across sessions and channels. It initiates work rather than waiting to be asked.
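Here is a toy sketch of how those three properties fit together in one loop. All names are assumptions for illustration: `plan` is a hard-coded stand-in for a model-generated plan, the tools are simple functions rather than a real browser or email client, and `memory` is a plain list rather than a durable store. A production agent would also need scheduling and guardrails, which this sketch leaves out.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str]  # (tool name, argument; empty means "use previous result")


def plan(goal: str) -> List[Step]:
    """Hypothetical planner; a real agent would ask a model to produce this."""
    return [("fetch", goal), ("summarize", ""), ("send", "")]


def run_agent(goal: str,
              tools: Dict[str, Callable[[str], str]],
              memory: List[str]) -> str:
    """Work through the plan, feeding each tool's output to the next step."""
    result = ""
    for tool_name, arg in plan(goal):
        result = tools[tool_name](arg or result)
        memory.append(f"{tool_name}: {result}")  # record what happened
    return result


outbox: List[str] = []  # stands in for a real email or Slack channel


def send(text: str) -> str:
    outbox.append(text)
    return "sent"


tools: Dict[str, Callable[[str], str]] = {
    "fetch": lambda topic: f"raw posts for {topic}",
    "summarize": lambda text: f"digest of [{text}]",
    "send": send,
}

memory: List[str] = []
status = run_agent("competitor blog", tools, memory)
```

After the run, `outbox` holds the digest and `memory` holds one entry per step, without the user having prompted each step individually.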

A quick mental test: if you close your laptop, does the system keep working on the things you’ve assigned it? If yes, it’s probably an agent. If no, it’s a chatbot or copilot in better packaging.

What agents are built for

- Multi-step workflows (research, then summarize, then send)

- Recurring tasks (weekly reports, inbox sweeps, competitor monitors)

- Multi-system work (read a PDF, extract data, update a sheet, notify on Slack)

- Long-running relationships (remembering decisions and preferences over months)

Examples: Brainmox (persistent personal-assistant agent with tools and multi-channel presence), AutoGPT (early autonomous agent), OpenAI’s Operator (browser agent), Claude Code (coding agent), n8n with AI nodes (workflow agent).

The line between “agent” and “chatbot with tool calling” gets blurry in the market. The cleanest test is three questions: does it have persistent memory, can it run unattended, and can it use multiple tools to complete a goal without being prompted step by step? Three yeses and you have a real agent.
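The three-question test is simple enough to write down as a checklist. The sketch below uses a hypothetical `Product` record whose field names are made up for illustration; the only logic is that all three answers must be yes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    """Hypothetical evaluation record for an AI product."""
    persistent_memory: bool
    runs_unattended: bool
    multi_tool_goal_completion: bool


def is_real_agent(p: Product) -> bool:
    # Three yeses are required; any single "no" fails the test.
    return all((p.persistent_memory,
                p.runs_unattended,
                p.multi_tool_goal_completion))


chatbot_with_tools = Product(persistent_memory=False,
                             runs_unattended=False,
                             multi_tool_goal_completion=True)
full_agent = Product(persistent_memory=True,
                     runs_unattended=True,
                     multi_tool_goal_completion=True)
```

Under this rule, `is_real_agent(chatbot_with_tools)` is false even though the product can chain tools, because it neither remembers nor runs on its own.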

Side-by-side: the differences that matter

| Property | Chatbot | Copilot | AI Agent |
|---|---|---|---|
| Primary interface | Chat window | Embedded in host app | Multi-channel, often headless |
| Memory | Session-only (or shallow) | Scoped to host app | Persistent, long-term |
| Autonomy | None, reactive | Low; suggests, you approve | High; takes action toward goals |
| Tool use | Limited or none | Tools of the host app | Real tools (browser, files, APIs, scheduler) |
| Works when you’re offline? | No | No | Yes |
| Can initiate work? | No | No; responds to your activity | Yes; scheduled or event-triggered |
| Typical task length | Seconds | Minutes | Minutes to hours |
| What breaks if you close the app? | Nothing; session ends | Everything; copilot is gone | Nothing; the agent keeps running |

The pattern: as you move from chatbot to copilot to agent, you gain memory, autonomy, tool access, and the ability to do work that doesn’t require you hovering.

You also inherit complexity, risk, and a real need for privacy, audit trails, and guardrails. Agents can do real things, which means an agent without good oversight can do real damage. This is why serious agent products invest heavily in audit logs, sandboxed execution, and loop prevention.
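As a rough illustration of two of those safeguards, the sketch below wraps tool calls in a hypothetical `GuardedExecutor` that writes an audit entry for every call and refuses to continue once a step budget is spent. The class name, the log format, and the budget mechanism are all assumptions, and sandboxed execution is deliberately not shown; real products will differ.

```python
import json
from typing import Callable, Dict, List


class StepBudgetExceeded(RuntimeError):
    """Raised when the agent tries to exceed its allowed number of tool calls."""


class GuardedExecutor:
    """Hypothetical wrapper: audit every tool call and cap the total count."""

    def __init__(self, tools: Dict[str, Callable[[str], str]],
                 max_steps: int) -> None:
        self.tools = tools
        self.max_steps = max_steps
        self.steps = 0
        self.audit_log: List[str] = []  # one JSON line per event

    def call(self, tool_name: str, arg: str) -> str:
        if self.steps >= self.max_steps:
            # Simple loop prevention: a runaway plan stops here.
            self.audit_log.append(json.dumps(
                {"event": "blocked", "tool": tool_name,
                 "reason": "step budget exceeded"}))
            raise StepBudgetExceeded(f"limit of {self.max_steps} steps reached")
        self.steps += 1
        result = self.tools[tool_name](arg)
        self.audit_log.append(json.dumps(
            {"event": "call", "step": self.steps, "tool": tool_name,
             "arg": arg, "result": result}))
        return result


executor = GuardedExecutor({"echo": lambda s: s}, max_steps=2)
executor.call("echo", "first")
executor.call("echo", "second")
try:
    executor.call("echo", "third")
except StepBudgetExceeded:
    pass  # the third call is refused, and the refusal is logged
```

A fixed step budget is a blunt instrument, but it shows the principle: the limit and the log live outside the model, so they hold even when the model’s plan goes wrong.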

How to tell them apart in the wild

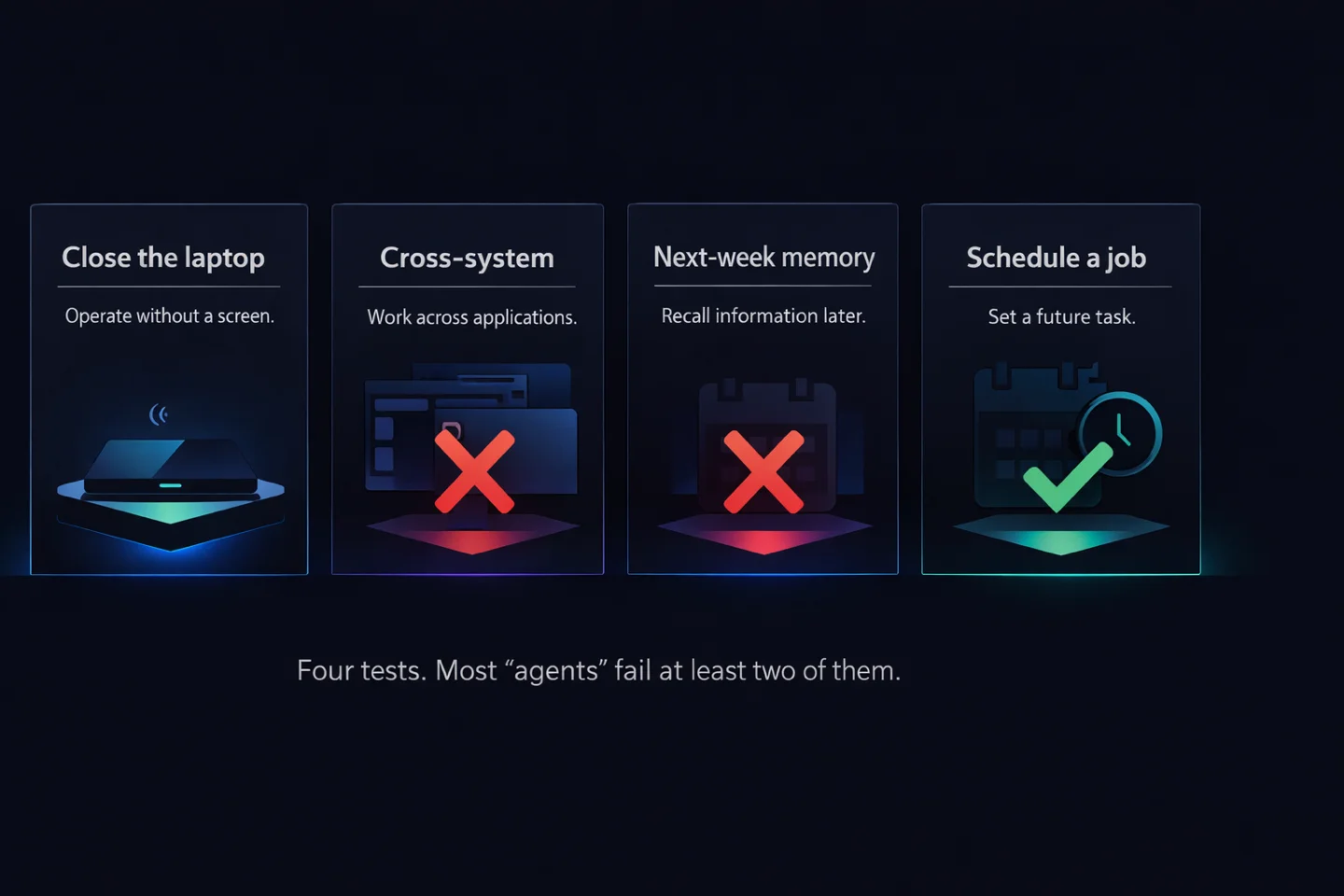

Vendors stretch definitions. Here’s a simple field test you can run on any AI product to see what it actually is.

The “close the laptop” test. Assign the tool a multi-hour task. Close your laptop. Come back in two hours. If work progressed, it’s an agent. If you come back to a chat waiting for your next message, it’s a chatbot or copilot.

The “cross-system” test. Ask the tool to read something in one system (a PDF on your desktop, say) and take action in another (send a Slack message based on it). If it can’t, it’s not an agent.

The “next-week memory” test. Have a substantive conversation today. Next week, open a new session and ask about what you discussed. If it remembers the context, not just an isolated fact or two, it has agent-level memory.

The “schedule a job” test. Ask it to ping you every Friday at 4 PM with a summary of the week’s work. If this isn’t a core feature, it’s not an agent.
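For a sense of what “core feature” means here, the smallest piece of a scheduler is working out when the next run is due. The sketch below computes the next Friday at 4 PM from a given moment using only the standard library. The function name is hypothetical, and it assumes naive local datetimes; a real scheduler would also need time zones, persistence, and something to wake the agent up.

```python
from datetime import datetime, timedelta

FRIDAY = 4  # datetime.weekday() numbers Monday as 0, so Friday is 4


def next_friday_4pm(now: datetime) -> datetime:
    """Return the next Friday 16:00 strictly after `now` (naive local time)."""
    days_ahead = (FRIDAY - now.weekday()) % 7
    candidate = (now + timedelta(days=days_ahead)).replace(
        hour=16, minute=0, second=0, microsecond=0)
    if candidate <= now:
        # It is already Friday at or after 4 PM: schedule next week's run.
        candidate += timedelta(days=7)
    return candidate


monday_morning = datetime(2026, 1, 5, 9, 0)
friday_evening = datetime(2026, 1, 2, 17, 0)

run_after_monday = next_friday_4pm(monday_morning)
run_after_friday = next_friday_4pm(friday_evening)
```

Both calls land on Friday, January 9, 2026 at 16:00: the first because that is the coming Friday, the second because that week’s 4 PM slot has already passed.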

Most products marketed as agents fail at least two of these tests. That doesn’t make them useless. It just means they’re chatbots or copilots wearing a new label.

Which one do you actually need?

Use a chatbot if: you want a brilliant writing and thinking partner for one-off tasks. ChatGPT and Claude are exceptional for this and probably always will be.

Use a copilot if: your work lives inside one or two applications (a code editor, an email client, a spreadsheet) and you mostly need faster drafting and better suggestions in place.

Use an agent if: your work spans multiple systems, recurs on a schedule, or requires the AI to remember context over weeks or months. Founders running businesses, consultants juggling clients, operators running repeatable workflows: this is where agents start to matter, and where chatbots start to break.

Most professionals end up using all three, for different jobs. The mistake is trying to use a chatbot for an agent’s work, or an agent for what a chatbot handles fine. They aren’t substitutes. They’re different categories with different strengths.

Where this is all heading

The industry is gradually collapsing chatbots and copilots into agents, because a capable agent can do everything the other two can do, plus keep working after you leave the room.

But the hard part of building an agent isn’t the language model. It’s the memory architecture, the tool sandbox, the scheduling system, the audit trail, and the multi-channel plumbing. Those are engineering problems, not prompt-engineering problems. The products that nail them will define the next five years of how work gets done.

At Brainmox, we’re building exactly one thing: an AI agent you can hire, install, and trust with recurring work. Persistent memory, real tools, multi-channel presence, and a privacy-first architecture that keeps your data on your machine, not someone else’s cloud.

If you’ve outgrown chatbots and want an assistant that works when you’re not watching, we’d like to meet you.