Every Monday morning, millions of founders open an AI chat tool and start typing the same sentence: “I’m working on a B2B SaaS product called…”

They typed it last Monday too. And the Monday before that.

Each time, they’re re-explaining their company, their project, their preferences, the decision they already made last week. The assistant, however smart it sounds, has forgotten all of it.

This is the paradox of AI in 2026. The models are brilliant. They can reason about contract law, debug Python, and write a decent investor email. But they can’t remember you from one day to the next. You’re not talking to an assistant. You’re running the same onboarding script every morning with a new temp.

This post is about why that happens, what memory actually means (because every AI company is now claiming it), and what real persistent memory looks like when an AI is built to work alongside you, not just chat with you.

Why AI forgets: the stateless problem

Most people don’t realize this about ChatGPT, Claude, Gemini, and every other chat AI: the models behind them are stateless by design.

That’s a technical word with a simple meaning. Every time you send a message, the AI processes it from scratch. No memory of what happened five minutes ago, yesterday, or last year. The model itself has no idea who you are.

What keeps a conversation coherent within a single session is a trick called the context window. The chat app quietly re-sends your entire conversation history with every new message, so the model can “remember” by re-reading. When you close the tab, when the session ends, when you start a new chat, the window resets. Everything inside it disappears.
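The re-sending trick can be pictured with a short sketch. This is an illustration under assumptions, not any vendor’s actual code: `fake_model`, `ChatSession`, and the product name `ExampleApp` are hypothetical names invented here. The point is only that the history lives in the app, and the model sees whatever it is handed on each turn.

```python
from typing import Dict, List

Message = Dict[str, str]


def fake_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for a stateless model: it sees only what is passed in."""
    user_turns = [m["content"] for m in messages if m["role"] == "user"]
    return f"I can see {len(user_turns)} user message(s); the first was: {user_turns[0]!r}"


class ChatSession:
    """The chat app, not the model, holds the conversation history."""

    def __init__(self) -> None:
        self.history: List[Message] = []

    def send(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        # The entire history is re-sent on every turn.
        reply = fake_model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


session = ChatSession()
session.send("I'm working on a B2B SaaS product called ExampleApp.")
second_reply = session.send("What did I just tell you?")

# A new session starts with an empty history, so nothing carries over.
fresh = ChatSession()
fresh_reply = fresh.send("What did I just tell you?")
```

In this sketch, `second_reply` can mention ExampleApp only because the first message was sent again alongside the second. `fresh_reply` cannot, because the new session’s history is empty.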

This is how the systems are designed to work at massive scale. Statelessness is exactly what lets OpenAI serve a billion users a week without each one consuming dedicated resources.

The upside: the model is fast and cheap. The downside: it has no relationship with you.

Most AI companies have tried to paper over this with features they call “memory.” ChatGPT’s memory. Claude’s Projects. Gemini’s personalized context. These are improvements, but most of them are a thin layer on top of a fundamentally forgetful system.

Understanding the difference between the layers is how you tell a toy from a tool.

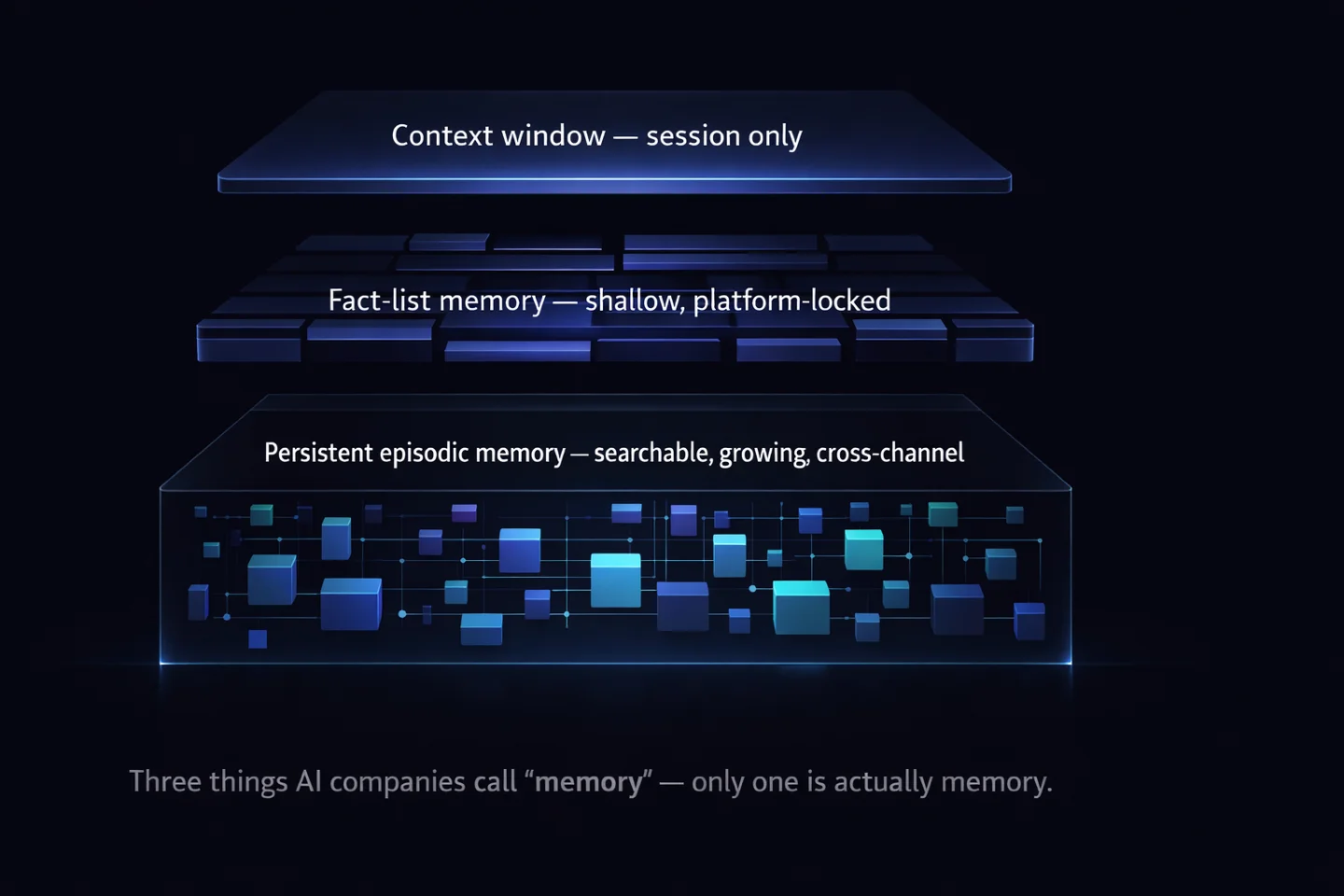

The three kinds of “memory” AI companies talk about

When someone says “this AI has memory,” they could mean three very different things.

1. Context-window memory (minutes to hours). This is the memory that keeps a single conversation coherent. When you reference something you said three messages ago, the AI can respond sensibly because the whole conversation is in its working memory. Every AI tool has this. It vanishes the moment the session ends.

2. Fact-list memory (the “sticky notes” approach). This is what most AI companies shipped when they added a memory feature in the last two years. ChatGPT’s memory is the best-known example: the model quietly extracts notable facts from your conversations (“user is based in Toronto,” “user prefers short responses”) and saves them to a short list. Every new conversation starts with that list prepended.

This is better than nothing, but it’s shallow. The list is short. The facts are stripped of context. The AI remembers that you decided something, without the conversation where you decided it, without the trade-offs you weighed, without the concern you raised. And crucially, that memory is locked to that platform. Your ChatGPT memory doesn’t follow you to Claude, or to any other tool you might use tomorrow.

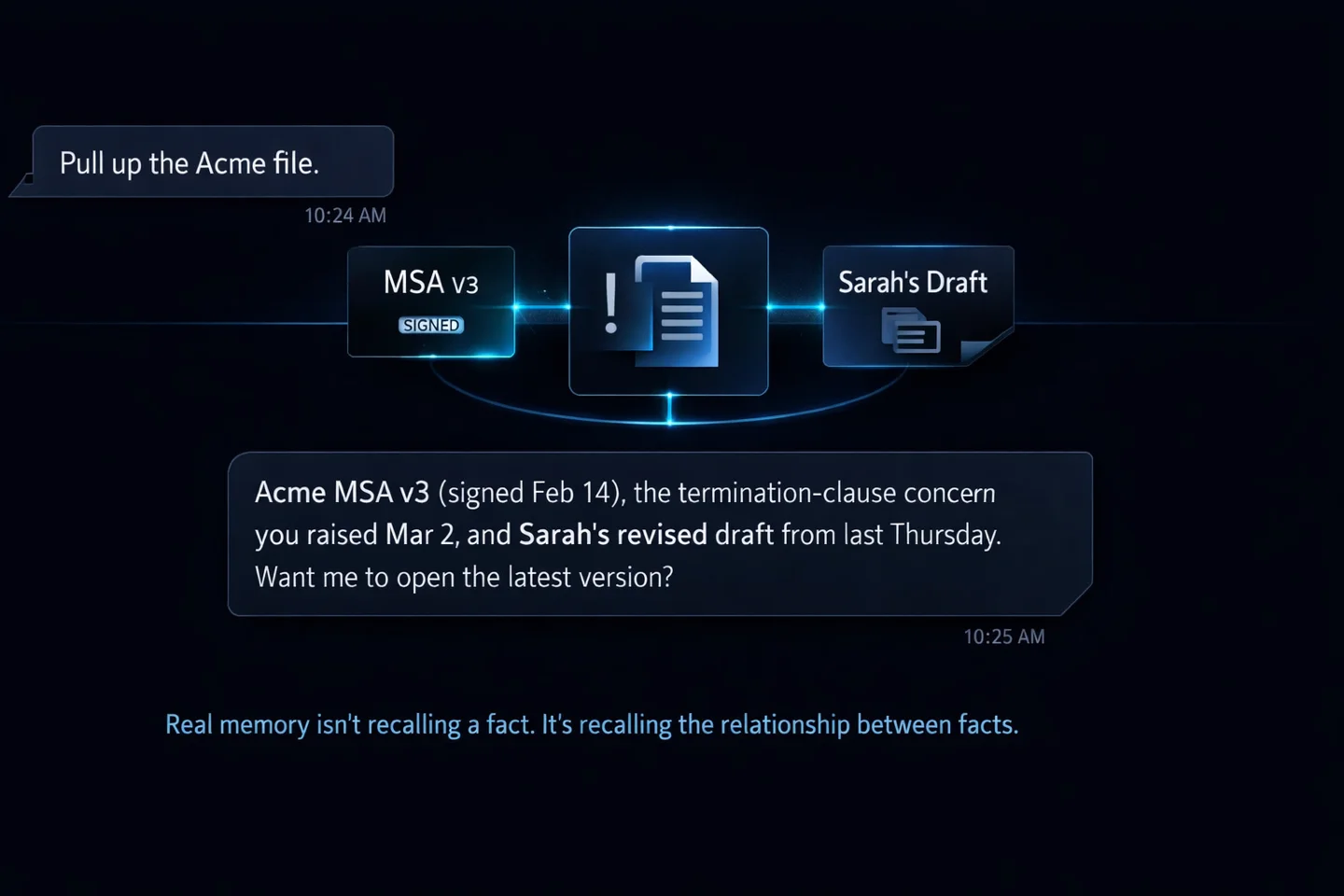

3. Persistent episodic memory (a real, growing knowledge of you). This is what humans have, and it’s what real AI co-workers need. Not a list of facts, but a structured, searchable, growing record of every interaction, every document, every decision, every preference, indexed and retrievable by relevance.

When you mention “the Acme contract,” a real-memory AI doesn’t just recognize the name. It pulls up the PDF you shared in February, the revision you made in March, the concern you raised about the termination clause, and the reply your lawyer sent. That’s context. That’s memory.

Under the hood, this kind of memory is built on vector databases, retrieval systems, and carefully designed storage. The implementation isn’t trivial, which is why most AI chat tools haven’t shipped it. It’s expensive, it requires thoughtful privacy design, and it doesn’t scale the way statelessness does. But for anyone using AI to actually work, not just chat, it’s the only kind that matters.
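To make “indexed and retrievable by relevance” concrete, here is a minimal sketch. It is a toy under stated assumptions: `Episode`, `EpisodicMemory`, `embed`, and `cosine` are hypothetical names, and the word-count “embedding” stands in for the learned embeddings and vector database a production system would likely use.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class Episode:
    date: str
    text: str


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (an assumption, not a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class EpisodicMemory:
    """Stores every episode with its context and retrieves by relevance."""

    def __init__(self) -> None:
        self.episodes: List[Episode] = []

    def remember(self, date: str, text: str) -> None:
        self.episodes.append(Episode(date, text))

    def recall(self, query: str, k: int = 3) -> List[Episode]:
        q = embed(query)
        scored = [(cosine(q, embed(e.text)), e) for e in self.episodes]
        # Keep only episodes that share something with the query, best match first.
        relevant = [(s, e) for s, e in scored if s > 0]
        relevant.sort(key=lambda pair: pair[0], reverse=True)
        return [e for _, e in relevant[:k]]


memory = EpisodicMemory()
memory.remember("February", "Shared the Acme contract PDF")
memory.remember("March", "Revised the Acme contract; concern raised about the termination clause")
memory.remember("March", "Preferred tone for investor emails is concise")

hits = memory.recall("Acme contract termination clause")
```

Here `hits` contains the two Acme episodes, with the one about the termination clause ranked first, and leaves out the unrelated note about email tone. Unlike a fact list, each hit keeps its date and surrounding detail.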

What real memory looks like in practice

A real-memory AI changes how you work in ways that are hard to appreciate until you’ve experienced them for a few weeks.

You stop repeating yourself. You mention your company name once, your tone preferences once, your clients once, your projects once. From then on, the assistant knows. You don’t open every conversation with an onboarding monologue.

Decisions stick. Last month, you decided to raise the pricing on your enterprise tier. You told your assistant. Three weeks later, when drafting a proposal, it uses the new pricing (not the old one) because it remembers the decision and the reasoning behind it.

Context accumulates. Drop a contract in January. Mention a concern about it in February. Ask for a revision in March. By April, your assistant has the full history and can answer, “Why did we change that clause?” without you re-explaining anything.

Preferences stop being prompts. You don’t need to say “write in my voice, be concise, don’t use emoji, avoid the word ‘leverage’” every single time. After a few corrections, the assistant learns. After a few months, it knows your voice better than most of your team does.

Ongoing work has continuity. A weekly report, a Friday digest, a monthly review: none of it starts from zero. Your assistant remembers last week’s version and builds on it, flags what’s changed, and asks the right questions about the gaps.

The result is subtle but transformative: you stop managing the tool and start working with it.

The memory test: 5 questions to ask any AI tool

Before you commit to any AI tool that claims to have memory, run it through five questions.

1. Does it remember me next week? Close the session. Wait seven days. Open a fresh conversation. Does the AI know who you are and what you’ve been working on, or does it start from zero?

2. Does it remember the context, or just a flattened fact? Ask it why you made a decision two weeks ago. If it can reconstruct the reasoning, it has real memory. If it only recites the outcome, it’s working from a sticky note.

3. Can I see what it remembers? A real memory system is inspectable. You should be able to see what’s stored, correct mistakes, and delete anything you want gone. If memory is a black box, it’s also a black hole.

4. Is the memory searchable? Can you ask “when did we last discuss the Acme renewal?” and get an answer? Good memory is retrievable, not just archived.

5. Does the memory follow the assistant across channels? If you tell your AI something on desktop, does it know it when you message via Slack or Telegram? Channel-split memory is barely memory at all.

If a tool fails three or more of these, what it has is a conversation feature, not a memory system. That’s fine for drafting an email. It won’t carry a real working relationship.
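Questions 3 through 5 describe properties that are easy to picture in code. The sketch below is an assumption-laden illustration, not a description of any product’s storage: `InspectableMemory` and `MemoryRecord` are hypothetical names. It shows one store shared by every channel, where records can be listed, corrected, deleted, and searched.

```python
from dataclasses import dataclass
from itertools import count
from typing import Dict, List


@dataclass
class MemoryRecord:
    record_id: int
    channel: str  # where the memory was captured, e.g. "desktop" or "slack"
    text: str


class InspectableMemory:
    """One store for all channels; every record can be seen, fixed, or removed."""

    def __init__(self) -> None:
        self._records: Dict[int, MemoryRecord] = {}
        self._ids = count(1)

    def add(self, channel: str, text: str) -> int:
        record_id = next(self._ids)
        self._records[record_id] = MemoryRecord(record_id, channel, text)
        return record_id

    def list_all(self) -> List[MemoryRecord]:
        return list(self._records.values())

    def correct(self, record_id: int, new_text: str) -> None:
        self._records[record_id].text = new_text

    def delete(self, record_id: int) -> None:
        del self._records[record_id]

    def search(self, term: str) -> List[MemoryRecord]:
        # Simple case-insensitive substring match, regardless of channel.
        needle = term.lower()
        return [r for r in self._records.values() if needle in r.text.lower()]


store = InspectableMemory()
a = store.add("desktop", "Acme renewal discussed; decision pending")
b = store.add("slack", "Enterprise tier pricing raised")
c = store.add("telegram", "Temporary note")

store.correct(a, "Acme renewal discussed; renewal approved")  # fix a mistake
store.delete(c)                                               # remove on request
found = store.search("acme renewal")                          # works across channels
```

Because the store is not split by channel, a note captured on desktop is found by the same search a Slack or Telegram conversation would run.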

Why this matters

Memory is the single biggest reason AI tools feel impressive in a demo and disappointing in a workweek. Demos are short. Workweeks are long. What makes an assistant valuable over weeks and months is exactly what statelessness doesn’t allow: accumulation.

The good news: this problem is solvable. Persistent memory, inspectable storage, cross-channel continuity, and privacy-first design are all possible today. They’re just not how most AI tools were built, because most AI tools were built to serve a billion anonymous sessions, not to work for you over a period of years.

At Brainmox, persistent memory is the foundation the whole product sits on. Every conversation, every file, every decision stays with your assistant. Tell it once. Share context once. It builds on that forever.

That’s what we think an AI professional should feel like. Not a stranger every Monday, but the colleague who was paying attention last week.